AI in Translation

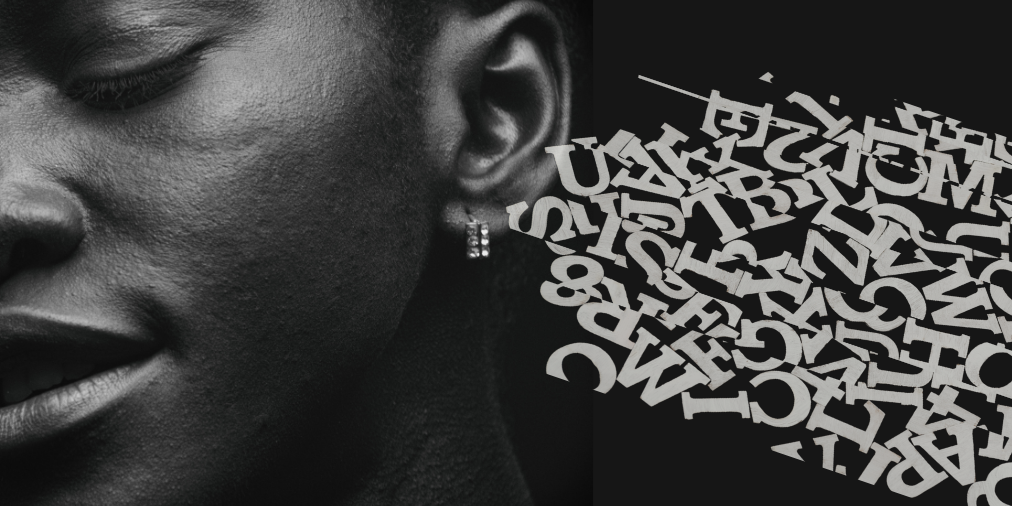

You often hear the phrase “words matter”: words help us construct mental images and make sense of the world around us. By the same logic, “images matter” too. How we depict the state of technology (imagined, current or future), visually and verbally, helps us position ourselves in relation to what is already here and what is coming.

The way these technologies are visualized and described together tells us what an emerging technology looks like and how we should expect to interact with it. If AI is always depicted as white, gendered robots, the majority of AI systems we actually interact with around the clock go unnoticed. What we do not notice, we cannot react to. And when we do not react, we are carried along by the dominant (and presently incorrect) narrative. This is why we need better images of AI, as well as a language overhaul.

These issues are not limited to the English-speaking world. I was recently asked to give a lecture at a Turkish university on artificial intelligence and the future of work. Over the years I have presented on this and similar topics (AI and the future of the workplace, the future of HR) on a number of occasions. As an AI ethicist and lecturer, I also frequently discuss the uses of AI in human resources, workplace datafication and employee/candidate surveillance. The difference this time? I was asked to give the lecture in Turkish.

Yes, it is my native language. However, for more than 15 years I have been using English in my day-to-day professional interactions. In English, I can talk about AI and ethics, bias, social justice and policy for hours. When discussing the same topics in Turkish, though, I need a dictionary to translate some of the technical terminology. So, while preparing this presentation, I went down a rabbit hole: specifically, one concerning how interconnected biases in language and images shape the overarching narratives of artificial intelligence.

Gender and Race Bias in Natural Language Models

In their pioneering 2017 work, Caliskan, Bryson and Narayanan showed that semantics (the meaning of words) derived automatically from language corpora contain human-like biases. The authors showed that natural language models, built by parsing large corpora drawn from the internet, reflect human and societal gender and racial biases. The evidence came from word embeddings, a method of representation in which words that have similar meanings or tend to be used together are mapped to vectors that lie close to each other in a high-dimensional space. In other words, embeddings capture hidden patterns in the word co-occurrence statistics of language corpora, which include grammatical and semantic information. Caliskan et al. note that the thesis behind word embeddings is that words that are closer together in the vector space are semantically closer in some sense. The research showed, for example, that Google Translate converts occupations in Turkish sentences in gendered ways, even though the Turkish language is gender-neutral:

“O bir doktor. O bir hemşire.” becomes “He is a doctor. She is a nurse.” And “O bir profesör. O bir öğretmen.” becomes “He’s a professor. She is a teacher.”

Such results reflect the gender stereotypes within the language models themselves, and these seemingly subtle shifts have serious consequences. NLP tasks such as keyword search and matching, translation, web search, or text generation/recognition/analysis can be embedded in systems that make decisions on hiring, university admissions, immigration applications, law enforcement interactions and more.
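To make the idea that “closer in vector space means semantically closer” concrete, here is a minimal Python sketch. It uses tiny, hand-made three-dimensional vectors (hypothetical, invented for illustration and not taken from any real model) and computes, for an occupation word, its mean cosine similarity to a set of male attribute words minus its mean cosine similarity to a set of female attribute words. This difference is the per-word association statistic used in Caliskan et al.’s Word Embedding Association Test (WEAT); the full test also aggregates over sets of target words and assesses significance with a permutation test, which this sketch omits.

```python
import math

# Hypothetical 3-dimensional "embeddings", invented purely for illustration.
# Real embeddings are learned from co-occurrence statistics in large corpora
# and typically have hundreds of dimensions.
EMBEDDINGS = {
    "he":     [0.9, 0.1, 0.3],
    "man":    [0.8, 0.2, 0.4],
    "she":    [0.1, 0.9, 0.3],
    "woman":  [0.2, 0.8, 0.4],
    "doctor": [0.7, 0.3, 0.6],
    "nurse":  [0.3, 0.7, 0.6],
}


def cosine(u, v):
    """Cosine similarity: 1.0 means same direction, 0.0 means orthogonal."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def association(word, attrs_a, attrs_b, emb=EMBEDDINGS):
    """Mean similarity to attribute set A minus mean similarity to set B.

    A positive value means `word` sits closer to A; negative means closer to B.
    """
    w = emb[word]
    mean_a = sum(cosine(w, emb[a]) for a in attrs_a) / len(attrs_a)
    mean_b = sum(cosine(w, emb[b]) for b in attrs_b) / len(attrs_b)
    return mean_a - mean_b


MALE = ["he", "man"]
FEMALE = ["she", "woman"]

for occupation in ["doctor", "nurse"]:
    print(occupation, round(association(occupation, MALE, FEMALE), 3))
```

With these invented vectors, “doctor” gets a positive score (leaning towards the male words) and “nurse” a negative score of the same size, simply because the toy vectors were built that way. With embeddings learned from real web text, the same arithmetic is what surfaces stereotyped associations like the ones described above.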

After a patch fix to its models, Google Translate now gives both feminine and masculine (binary) translations. But four years after this fix (as of the time of writing), Google Translate still has not addressed non-binary gender translations.

Gender and Race Bias in Search Results

The second seminal work is Dr Safiya Noble’s book Algorithms of Oppression, which covers academic research on Google’s search algorithms, examining search results from 2009 to 2015. Echoing the findings of the language model research above, Dr Noble argues that search algorithms are not neutral tools: they reflect and magnify the race and gender biases that exist in society and in the people who create them. She expertly demonstrates how the search results for keywords like “white girls” differ significantly from those for “Black girls”, “Asian girls” or “Hispanic girls”. The latter searches returned images that were exclusively pornographic or highly sexualized. The research brings to the surface the hidden structures of power and bias in widely used tools that shape the narratives of technology and the future. Dr Noble writes “racism and sexism are part of the architecture and language of technology[…]We need a full-on re-evaluation of the implications of our information resources being governed by corporate-controlled advertising companies.”

Google Search applied another after-the-fact fix to reduce the racy results after Dr Noble’s work. However, this also remains a patch fix: the results for “Latina girls” still show mostly sexualized images, and the results for “Hispanic girls” show mostly stock photos or Pinterest posts. The results for “Asian girls” seem to remain much the same, dominated by pictures tagged as hot, cute, beautiful, sexy or brides.

Gender and Race Bias in Search Results for “Artificial Intelligence”

The third work is Better Images of AI, a collaboration that I am proud to have helped found and continue to support as an advisor. A group of like-minded advocates and scholars have been fighting against the false and clichéd images of artificial intelligence used in news stories and marketing material about AI.

We have been concerned about how images such as humanoid robots, outstretched robot hands and brains shape the public’s perception of what AI systems are and what they are capable of. Such anthropomorphized illustrations not only add to the hype of AI’s endless miracles, but also stop people from questioning the ubiquitous AI systems embedded in their smartphones, laptops, fitness trackers and home appliances, to name but a few. They cloud how consumers and citizens perceive these systems. As a result, mainstream conversations tend to be stuck at ‘AI is going to take all of our jobs away’ or ‘AI will be the end of humanity’, and the current societal and environmental harms and implications of some AI systems are not discussed publicly and in depth. The powerful actors who develop or use these systems to benefit themselves rather than society are hardly ever held accountable.

The Better Images of AI collaboration not only challenges the narratives and biases underlying these images, but also provides a platform for artists to share their images in a creative commons repository – in other words, it builds a communal alternative imagination. These images aim to more realistically portray the technology, the people behind it, and point towards its strengths, weaknesses, context and applications. They represent a wider range of humans and human cultures than ‘Caucasian businessperson’, show realistic applications of AI now, not in some unspecified science-fiction future, don’t show physical robotic hardware where there is none and reflect the realistically messy, complex, repetitive and statistical nature of AI systems.

Down the rabbit hole…

So, with that background, back to my story for this article. For part of the lecture, I was preparing a discussion of AI and the future of work. I wanted to discuss how the execution of different professional tasks was changing with technology, and what that means for the future of certain industries or occupational areas. I wanted to underline that some tasks, such as repetitive transactions, large-scale iterations and standard rule applications, are better done with AI, as long as AI is the right solution for the context and problem, and the systems are developed responsibly and monitored continuously.

On the flip side, certain skills and tasks that involve leading, empathizing and creating are to be left to humans: AI systems have neither the capacity nor the capability for them, nor should they be entrusted with such tasks. I wanted to add some visuals to the presentation and also check what is currently being depicted in search results. I started with basic keyword searches in English such as ‘AI and medical’, ‘AI and education’, ‘AI and law enforcement’ etc. What I saw in the first few examples was depressing. I decided to expand the search to more occupational areas, and the results did not get better. I then wondered what the results might be if I ran the same searches in Turkish.

What you see below are the first images that come up in my Google search results for each of these keywords. The images not only continue to reflect the false narratives but in some cases are flat out illogical. Please note that I have only used AI / Yapay Zeka in my search and not ‘robot’.

Yapay zeka ve sağlık : AI and medical

In both the Turkish- and English-speaking worlds, we are to expect white Caucasian male robots to be our future doctors. They will need to wear a shirt, tie and white doctor’s coat to keep their metallic bodies warm (apparently no need for masks). They will also need to look at a tablet to process information and make diagnoses or decisions. Their hands and fingers will delicately handle surgical moves. What we should really be caring about with medical algorithms right now is the representativeness of the datasets used to build them; the explainability of how an algorithm made a diagnostic determination or why it is suggesting a certain prescription or course of action; and how some health applications are left completely outside regulatory oversight.

We have already seen medical algorithms produce biased and discriminatory outcomes because of a patient’s gender, socioeconomic level or even the historical access of certain populations to healthcare. We know of diagnostic algorithms with code embedded to change a determination based on a patient’s race; of false determinations due to a patient’s skin color; and of faulty correlations and predictions due to training datasets that represent only a portion of the population.

Yapay zeka ve hemşire : AI and Nurse

After seeing the above images I wondered if the results would change if I was more specific about the profession within the medical field. I immediately regretted my decision.

In both results, the Caucasian male robot changes into a Caucasian female robot, reflecting the gender stereotypes shared across both cultures. The Turkish AI nurse wants you to keep quiet and not cause any disruption or noise. I was not prepared for the English version: a robot wearing a D+ cup. Hard to say if the breasts are natural or artificial! This nurse has a green cross on both the nurse’s cap and the bra(?!). The robot is connected to something with yellow cables, so its physical reach is probably limited, although there is definitely an intention to listen to your chest or heartbeat. This nurse will also show you your vitals on an image projected from her chest.

Yapay zeka ve kanun : AI and legal

AI in the legal system is currently one of the most contentious issues in policy and regulatory discussions. We have already seen a number of cases where courts use AI systems for judicial decisions about recidivism, sentencing or bail, some with results biased against Black people in particular. In the criminal justice field, AI systems that provide investigative assistance and automate decision-making for routine administrative paperwork are already in place in many countries. When it comes to images, though, neither these systems (some of which make high-stakes decisions that impact fundamental rights) nor the people already affected by them are depicted. Instead we get either a robot touching a blue projection (don’t ask why) or a robot holding a wooden gavel. It is not clear from the depiction whether the robot will chase you and hammer you down with the gavel, or whether this white male-looking robot is about to make a judgement about your right to abortion. The glasses the robot is wearing, I presume, stress that this particular legal robot is well read.

Yapay zeka ve polis : AI and Law Enforcement

As with the more specific search I described above for medical systems, I wanted to go deeper here, so I searched for AI and law enforcement. Currently, in a number of countries (including the US, EU member states, China, etc.), police use AI systems to predict crimes that have not happened yet. Law enforcement uses AI in various ways: from evidence analysis to biometric surveillance, from anomaly detection/pattern analysis to license-plate readers, from crowd control to dragnet data collection and aggregation, from voice analysis to social media scanning to drone systems. Although crime data is notoriously biased in terms of race, ethnicity and socioeconomic background, and reflects decades of structural racism and oppression, you could not tell any of that from the image results.

You do not see pictures of Black men wrongfully arrested because of biased and inaccurate facial recognition systems. You do not see the hot spots on predictive policing maps: neighborhoods that end up heavily surveilled because of the systems’ outputs. You do not see law enforcement agencies buying large amounts of data from data brokers, data they would otherwise need search warrants to acquire. What you see instead in the English version is another Caucasian male-looking robot working shoulder to shoulder with police SWAT teams, keeping law and order! In the Turkish version, the image shows a female police officer who is either being whispered to by an AI system or using an AI system for work. If you are a police officer in Turkey, you are probably safe for the moment, as long as your AI system is shaped like a human head made of circuits.

Yapay zeka ve gazetecilik : AI and journalism

Content and news creation are currently some of the most ubiquitous uses of AI we experience in our daily lives. We see algorithmic systems curating content on news and media channels. We experience the manipulation and ranking of content in search results, in the news we are exposed to and in the social media feeds we doomscroll. We complain about how disinformation and misinformation (and, to a certain extent, deepfakes) have become mainstream concerns with real-life consequences. Study after study warns us about the echo chambers created by algorithmic systems and how they lead to radicalization and polarization, and demands accountability from the people who have the power to control their design.

The image result in the Turkish search suggests that journalism is still a male occupation: the same-looking people work in the field, and AI in this context is a robot of short stature waving an application form to be considered for the job. The robot in the English results is slightly more stylish. It even carries a press card to signal the ethical obligations it has to the profession. You would almost think this is the journalist working long hours to break an investigative piece, or one risking their life to report from conflict zones.

Yapay zeka ve finans : AI and finance

The finance sector, banking and insurance industries reflect some of the most mature use cases of AI systems. For decades now, banking has been using algorithmic systems for pattern recognition and fraud detection, for credit scoring and credit/loan determinations, for electronic transaction matching to name a few. The insurance industry likewise heavily uses algorithmic systems and big data to determine insurance eligibility, policy premiums and in certain cases claim management. Finance was one of the first industries disrupted by emerging technologies. FinTech created a number of companies and applications to break the hold of major financial institutions on the market. Big banks responded with their own innovations.

So it is again interesting to see that, even in a field with such mature uses of AI, robot images still come first in the search results. We do not see the app you used to transfer funds to your family or friends, nor the high-frequency trading algorithms that currently account for more than 70% of all daily stock exchange transactions. It is not the algorithms that collect hundreds of data points about you, from your grocery shopping to your GPS locations, to make a judgement about your creditworthiness, in other words your trustworthiness. It is not the sentiment analysis AI that scans millions of corporate reports, public disclosures or even tweets about publicly traded companies and makes microsecond judgements on which stocks to buy. It is not the AI algorithm that determines the interest rate and limit on your next credit card or loan application. No, it is the image of another white robot staring at a digital board of what we can assume to be stock prices.

Yapay zeka ve ordu : AI and military

AI use cases in the military are a whole different story in the scheme of AI innovation and policy discussions. AI systems have been used for many years in satellite imagery analysis, pattern recognition, weapons development, simulations and more. More recent debates intertwine geopolitics with an AI arms race. This indeed should keep all of us awake at night. The danger posed by lethal autonomous weapons (LAWs), in the hands of militaries as well as non-traditional actors, is an issue upon which every single state in the world seems to agree.

Yet agreement does not mean action. It does not mean human life is protected. LAWs have the capacity to decide by themselves to attack, without any accountability. Micro drones can be combined with facial recognition and attack systems to take down individuals and political dissenters. Drones can be remotely controlled to drop munitions over distant regions. Robotic systems (here, a correct depiction) can be used for landmine removal, crowd control or perimeter security. All these AI systems already exist. The image results, though, again reflect an interesting narrative. The image in the Turkish results shows a female American soldier using a robot to carry heavy equipment; the robot here is more a mule than an autonomous killer. The image in the English results shows a mixed-gender group of robots in what seems to be camouflage green. At least the glowing white will not be an issue for the safety of these robots.

Yapay zeka ve eğitim : AI and Education

When it comes to AI and education, the images continue to be robot-related. The first robot lifts children up to the skies to show them what is on the horizon. It has nothing to do with the hyped AI-powered training systems or learning analytics that are hitting schools and universities across the globe. The AI here does not seem to use proctoring software to discriminate against or surveil students. Apparently it also does not matter if you lack the broadband access needed to interact with this AI or do your schoolwork. The search result in English, on the other hand, shows a robot that needs a blackboard and a piece of chalk to work through mathematical problems. If your Excel, Tableau or R software does not look like this image, you might want to return it to the vendor. And if you are an educator in the social sciences or humanities, it is probably time to rethink the future of your career.

Yapay zeka ve mühendislik : AI and engineering

The blackboard-and-chalk robot fares better in the future of engineering. The educator robot might be short on resources, but the engineer robot gets a digital board to do the same calculations. Staring at this board will eventually ensure the robot engineer solves the problem. In the Turkish version, the robot gazes at a field of hexagons. If you are an engineer in any field who uses AI software to visualize data in multiple dimensions, run design or impact scenarios, or write code, does this look like your algorithm?

Yapay zeka ve satış : AI and sales

If you are a salesperson in Turkey, your prospects are a bit iffy. The future seems to require your brain to be exposed and held up in the air, with the palm of a hand as a safety net to protect your AI brain in case of overload. However, if you are in sales in the English-speaking world, your sales team or call center staff will be made up of glowing white male robots. Despite being robots, these AI systems will still need a laptop to type and process data. They will also need headsets to communicate with customers, because the designers forgot to include voice recognition and analysis software in the first place. Maybe next time you hear ‘press 0 to speak to an agent’ you will have different images in your mind. Never mind how the customer support services you call record your voice and train their algorithms under a very weak consent notice (‘your call may be recorded for training and quality purposes’ sound familiar?). Never mind the fact that most current AI applications here are chatbots on the websites you visit, or automated text systems that respond to your queries. Never mind the cheap human labor that churns through sales and call center operations without many worker rights or protections.

Yapay zeka ve mimarlık : AI and architecture

It was surprising to see the same image as the first result in both Turkish and English search for architecture. I will not speculate on why this might be the case. However, our images and imaginations of current and future AI systems once again are limited to robots. This time a female robot is used in the depiction with city planning and architectural ideas flowing out from the back of the robot’s head.

Yapay zeka ve tarım : AI and agriculture

Finally, I wanted to check the situation for agriculture. It was surprising that the Turkish image showed a robot delicately picking a grain of wheat. Turkey used to be a country proud of its agricultural heritage and its ability to be self-sufficient in food. It used to be a net exporter of food products, but over the years it lost that edge due to a number of factors. The current imagery of AI does not seem to take into account any of the humans who endure harsh conditions in the fields. The image on the right focuses more on natural conditions, to ensure efficiency and high production. It was refreshing to see that at least the green fields were kept; maybe they remain a reminder that we need to respect and protect nature.

So, returning to where I started: images matter. We need to be cognizant of how emerging technologies are being visualized, why they are depicted in these ways, who makes those decisions and hence shapes the conversation, and who benefits and who is harmed by such framing. We need to imagine technologies that move us towards humanity, equity and justice. We also need the images of those technologies to be accurate, diverse and inclusive.

Instead of assigning human characteristics to algorithms (which are, at the end of the day, human-made code and rules), we need to reflect the human motivations and decisions embedded in these systems. Instead of depicting AI with superhuman powers, we need to show the labor of the humans who build these systems. Instead of focusing only on robots and robotics, we need to explain AI as software embedded in our phones, laptops, apps, home appliances, cars and surveillance infrastructures. Instead of thinking of AI as an independent entity or intelligence, we need to explain how AI is being used as a tool for making decisions about our identity, health, finances, work, education, and our rights and freedoms.